
Cloud architects have long faced a fundamental trade-off among hardware-level security, extremely fast cold starts, and broad application compatibility: choose any two.

The Cloud Native Computing Foundation’s (CNCF) Hyperlight project delivers faster, more secure, and smaller workload execution to the cloud-native ecosystem—achieving hardware isolation with extremely fast cold starts by eliminating the operating system entirely. The challenge: without system calls, applications must be specially written for Hyperlight’s bare-metal environment.

Hyperlight and the Nanvix microkernel project have now combined efforts to solve this final constraint. By adding a POSIX compatibility layer, the integration enables Python, JavaScript, C, C++, and Rust applications to run with full hardware isolation and extremely rapid cold starts—much closer to meeting all three requirements.

This post explains the serverless trilemma and walks through how we attempt to break through it.

When building serverless infrastructure, architects have traditionally faced a painful trade-off. You can have any two of hardware-enforced isolation, fast cold starts, and broad application compatibility, but not all three.

With Hyperlight, we showed that it’s possible to create micro-VMs in the low tens of milliseconds by eliminating the OS and virtual devices. But this speed came at a cost: Hyperlight guests have no system calls available. Instead, they’re statically linked binaries that communicate only through explicit host-guest function calls. That’s secure and fast, but it limits which applications you can run.

Nanvix is a Rust-based microkernel created by the Systems Research Group at Microsoft Research. Unlike traditional OSes, Nanvix was co-designed with Hyperlight from the ground up. It’s not a general-purpose OS; it’s a minimal OS tailored specifically for ephemeral serverless workloads.

The result? You can now run real applications—with file systems, system calls, and language runtimes—inside a Hyperlight micro-VM, while maintaining hypervisor-grade isolation and achieving double-digit-millisecond cold starts.

The combination of Hyperlight and Nanvix addresses the trilemma by splitting responsibilities. Hyperlight controls everything the guest VM can do on behalf of the trusted host, providing hardware-enforced isolation. Nanvix’s optimized microkernel runs inside the Hyperlight guest, providing the POSIX system calls and file system interface that applications expect. Together, they enable hardware-isolated execution of Python, JavaScript, C, C++, and Rust applications with double-digit-millisecond cold starts.

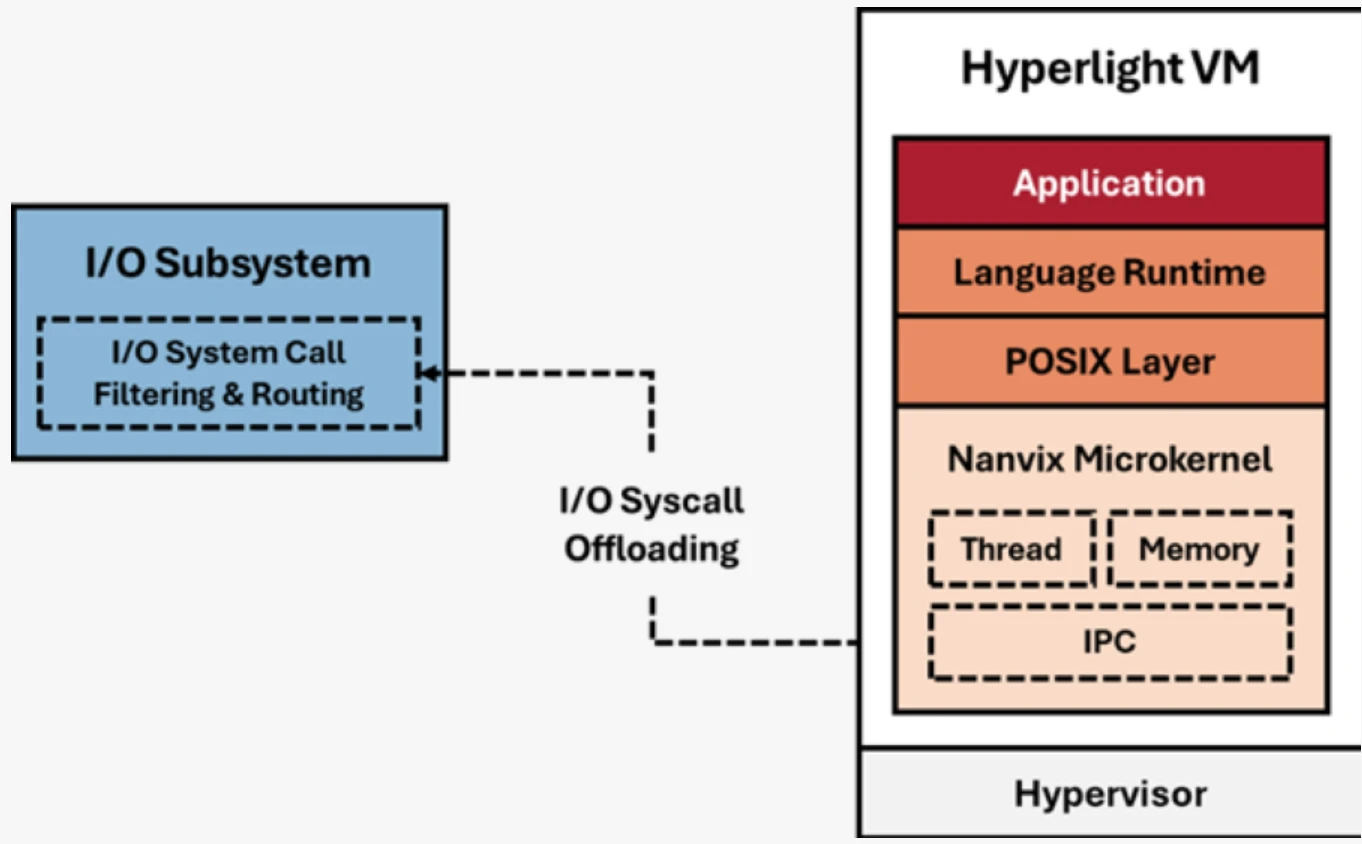

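The magic of Hyperlight-Nanvix lies in its split OS design. Rather than running a monolithic OS inside the VM, it splits responsibilities between two groups of components: those that run inside the guest VM (the Nanvix microkernel, which presents the POSIX interface to the application) and those that run on the host (the I/O subsystem, which carries out the actual operations).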

This split architecture means we get the best of both worlds. Applications see a familiar POSIX environment, but the actual I/O operations are handled by the host—enabling high density, fast cold starts, and shared state across invocations when needed.

One of the most powerful features of the Hyperlight-Nanvix integration is system call interposition. When a guest application makes a system call (like openat to open a file), the request flows through Nanvix, across the VM boundary via Hyperlight’s VM exit mechanism, and to the host. At this boundary, the host can audit the call, forward it to the real system call, or block it based on policy.

This gives you fine-grained control over what untrusted code can do, even when that code expects a full OS underneath it. Want to allow file reads but block network access? You can enforce that at the system call level without modifying the guest application.
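To make the "allow file reads but block network access" idea concrete, here is a minimal, self-contained sketch of a host-side policy check. It does not use the hyperlight-nanvix API: `GuestSyscall`, `Decision`, and `Policy` are hypothetical types invented for illustration, and a real deployment would have to handle many more calls and details (relative paths, `dirfd`, symlinks) than this does.

```rust
use std::path::{Component, Path};

/// Hypothetical, simplified view of a guest request as it arrives at the host.
/// These types are illustrative only and are not part of hyperlight-nanvix.
#[derive(Debug, Clone, PartialEq, Eq)]
pub enum GuestSyscall {
    OpenAt { path: String, write: bool },
    Read { fd: i32 },
    Connect { addr: String },
}

/// What the host decides to do with an intercepted call.
#[derive(Debug, Clone, PartialEq, Eq)]
pub enum Decision {
    Forward,
    Deny(&'static str),
}

/// A read-only policy: files under `readable_root` may be opened for reading,
/// everything network-related is refused.
pub struct Policy {
    pub readable_root: String,
}

/// True if `path` lies under `root` and contains no `..` components.
/// (Symlinks and relative paths are deliberately not handled in this sketch.)
fn is_under(root: &str, path: &str) -> bool {
    let p = Path::new(path);
    let has_parent_dir = p.components().any(|c| matches!(c, Component::ParentDir));
    !has_parent_dir && p.starts_with(root)
}

impl Policy {
    pub fn decide(&self, call: &GuestSyscall) -> Decision {
        match call {
            GuestSyscall::OpenAt { write: true, .. } => Decision::Deny("writes are not allowed"),
            GuestSyscall::OpenAt { path, .. } if is_under(&self.readable_root, path) => {
                Decision::Forward
            }
            GuestSyscall::OpenAt { .. } => Decision::Deny("path is outside the readable root"),
            GuestSyscall::Read { .. } => Decision::Forward,
            GuestSyscall::Connect { .. } => Decision::Deny("network access is blocked"),
        }
    }
}
```

Because the decision is made on the host side of the VM boundary, the guest application needs no changes; it simply sees the call succeed or fail.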

Hyperlight-Nanvix delivers all three requirements—hardware isolation, fast cold starts, and application compatibility. But different use cases have different security requirements. The integration supports three deployment architectures that let you optimize for your specific threat model.

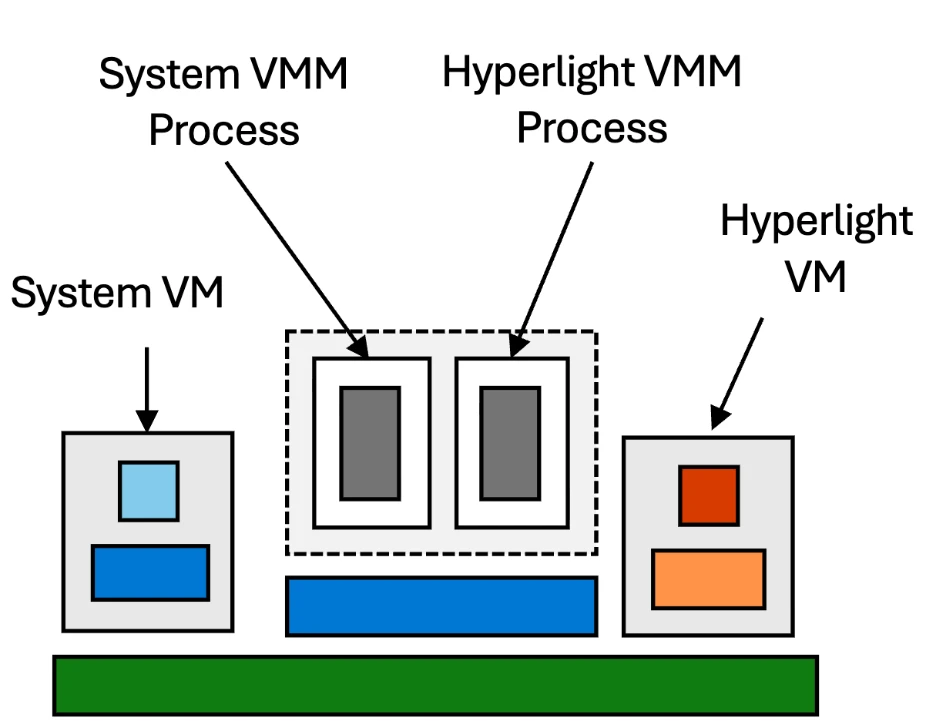

Across all three modes, the Hyperlight VM remains identical. What changes is how the I/O subsystem is deployed: whether it runs in the same process as the VMM, in a separate process, or in a separate VM entirely. Each architecture offers different trade-offs between isolation strength, performance, and resource density.

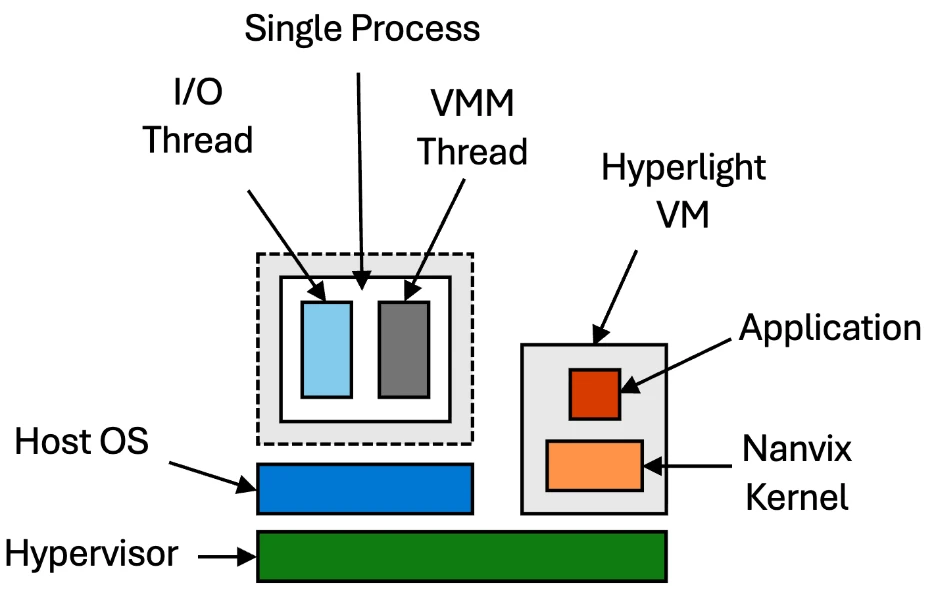

The simplest deployment model runs the I/O subsystem and the Hyperlight VMM in a single host process. The VMM thread manages the Hyperlight VM, while an I/O thread handles system call interposition. This provides the same threat model as Hyperlight without Nanvix—fast and simple, ideal for running small, untrusted workloads with hardware isolation.

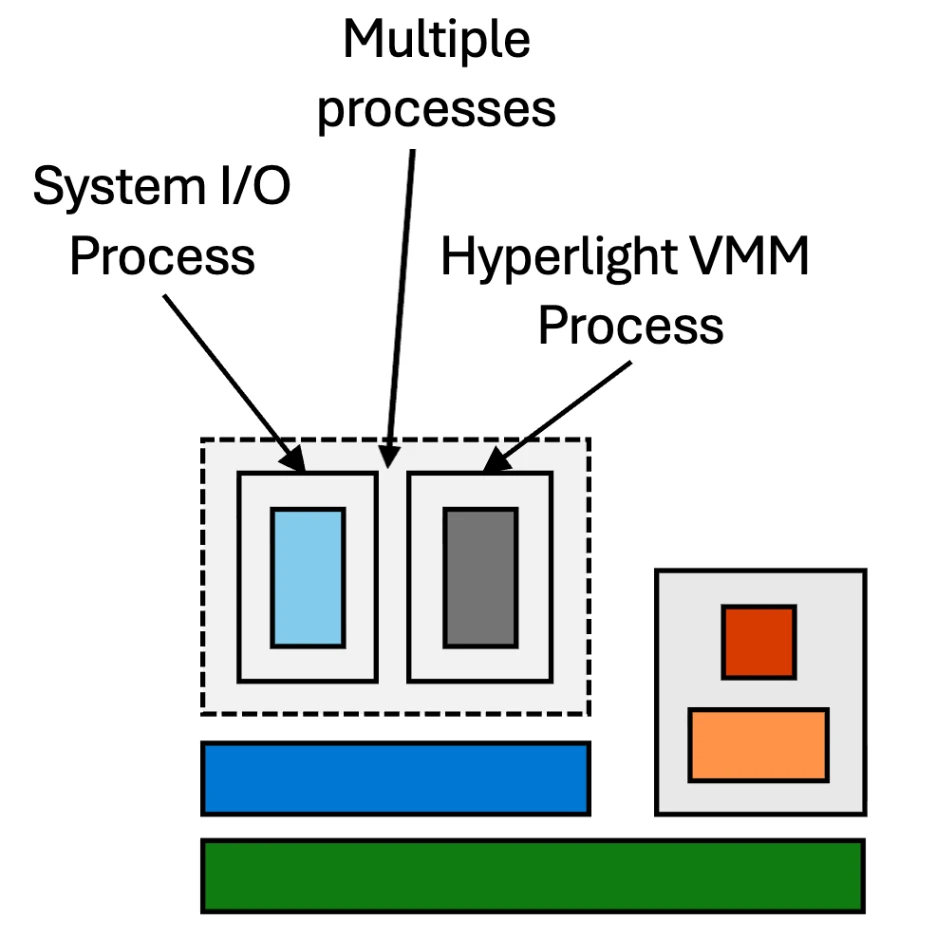

For improved isolation, you can separate the VMM and I/O handling into different host processes. The VMM process manages the Hyperlight VM, while a separate system process handles I/O operations. This constrains the blast radius if a vulnerability is exploited—an attacker who escapes the VM still can’t access I/O resources directly. The system I/O process can also be shared across multiple concurrent instances for the same tenant, improving both density and deployment time.

The most isolated deployment runs two separate VMs on the host hypervisor (using Hyper-V or KVM). The System VM handles all I/O system calls and can serve as a shared backend for multiple Hyperlight VMs. The Hyperlight VM forwards I/O requests across the hypervisor boundary to the System VM, providing defense-in-depth with multiple hypervisor boundaries.
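One way to keep the three modes straight is to model them as a small enum ordered by isolation strength. The sketch below is purely illustrative: `IoPlacement` and `least_isolated_satisfying` are hypothetical names, not part of any Hyperlight or Nanvix API, and treating the single-process mode as having no shareable I/O backend is our assumption (the descriptions above only mention sharing for the other two modes).

```rust
/// Where the I/O subsystem runs relative to the Hyperlight VMM.
/// Variants are declared from least to most isolated, so the derived
/// ordering reflects isolation strength. Hypothetical type for illustration.
#[derive(Debug, Clone, Copy, PartialEq, Eq, PartialOrd, Ord)]
pub enum IoPlacement {
    /// I/O thread lives in the same host process as the VMM thread.
    SameProcess,
    /// A separate host process handles I/O.
    SeparateProcess,
    /// A separate System VM handles I/O.
    SystemVm,
}

impl IoPlacement {
    pub const ALL: [IoPlacement; 3] = [
        IoPlacement::SameProcess,
        IoPlacement::SeparateProcess,
        IoPlacement::SystemVm,
    ];

    /// Whether one I/O backend can serve several Hyperlight VMs.
    /// Assumption: only the two out-of-process modes are shareable.
    pub fn backend_shareable(self) -> bool {
        !matches!(self, IoPlacement::SameProcess)
    }

    /// The kind of boundary that separates the VMM from I/O handling.
    pub fn boundary_between_vmm_and_io(self) -> &'static str {
        match self {
            IoPlacement::SameProcess => "thread (same host process)",
            IoPlacement::SeparateProcess => "host process",
            IoPlacement::SystemVm => "hypervisor (separate System VM)",
        }
    }
}

/// Picks the least isolated (and therefore simplest) mode that still meets
/// the requested minimum isolation and sharing requirement.
pub fn least_isolated_satisfying(min: IoPlacement, need_shared_backend: bool) -> IoPlacement {
    IoPlacement::ALL
        .iter()
        .copied()
        .find(|m| *m >= min && (!need_shared_backend || m.backend_shareable()))
        // SystemVm satisfies every combination, so this fallback is never hit.
        .unwrap_or(IoPlacement::SystemVm)
}
```

The selection rule here is deliberately simple; a real choice would also weigh performance and density, which this sketch does not model.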

We’ve tested Hyperlight-Nanvix against other isolation technologies using real-world applications, and our early benchmarks show very promising results.

How close did we get to breaking the trilemma? We’re preparing a detailed benchmark analysis that we’ll share in a follow-up post, including methodology, reproducible test cases, and comparative data across different workloads. This will give you the data and tests you need to decide for yourself.

Because Hyperlight-Nanvix provides a POSIX compatibility layer, you can run applications in virtually any language, among them Python, JavaScript, C, C++, and Rust.

The key insight is that language runtimes themselves are just applications. By providing system calls and a file system, Nanvix enables you to embed interpreters like QuickJS or CPython inside the micro-VM. Your JavaScript or Python code runs normally—it has no idea it’s executing inside a hardware-isolated sandbox.
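Since an interpreter is just another guest application, choosing one can be as simple as looking at the script being run. The sketch below maps a file name to the runtime that would execute it. It is an assumption about how such dispatch could work, not a description of the hyperlight-nanvix wrapper's internals; `GuestRuntime` and `runtime_for` are hypothetical names.

```rust
use std::path::Path;

/// Which guest-side program would execute a given file.
/// Hypothetical type for illustration only.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum GuestRuntime {
    /// JavaScript, run by an embedded QuickJS interpreter.
    QuickJs,
    /// Python, run by an embedded CPython interpreter.
    CPython,
    /// Anything else is treated as a precompiled native guest binary.
    NativeBinary,
}

/// Chooses a runtime from the file extension (an assumed convention).
pub fn runtime_for(path: &str) -> GuestRuntime {
    match Path::new(path).extension().and_then(|ext| ext.to_str()) {
        Some("js") => GuestRuntime::QuickJs,
        Some("py") => GuestRuntime::CPython,
        _ => GuestRuntime::NativeBinary,
    }
}
```

For example, `runtime_for("guest-examples/hello.py")` selects `GuestRuntime::CPython`, while a file with no extension falls through to `GuestRuntime::NativeBinary`.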

This approach also explains why Hyperlight-Nanvix achieves better performance than general-purpose operating systems: Nanvix is optimized for workloads you want to spin up, execute, and tear down as quickly as possible—the exact pattern cloud-native serverless functions demand.

One compelling use case for Hyperlight-Nanvix is executing AI-generated code. As large language models become more capable of writing code, we need secure environments to run that code without risking our infrastructure.

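AI-generated code should be treated as untrusted and potentially malicious. With Hyperlight-Nanvix, you can run each generated snippet in its own hardware-isolated micro-VM and restrict, at the system call level, what it is allowed to do.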

The hypervisor boundary means that even if the generated code contains an exploit targeting the language runtime, the attacker still faces a hardware-enforced wall. And because cold starts are so fast, you can afford to create a fresh VM for every code execution—no need to reuse potentially compromised sandboxes.
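The "fresh VM for every execution" pattern can be sketched as a helper that builds a new sandbox per snippet and drops it immediately afterwards. To keep the example self-contained, it uses a hypothetical `EphemeralSandbox` trait and an in-memory `EchoSandbox` stand-in rather than the real hyperlight-nanvix `Sandbox` type; only the create-run-discard structure is the point here.

```rust
/// Minimal interface for something that can execute untrusted source once.
/// Hypothetical trait for illustration; not the hyperlight-nanvix API.
pub trait EphemeralSandbox {
    fn run(&mut self, source: &str) -> Result<String, String>;
}

/// Runs every snippet in its own freshly created sandbox.
/// `create` is called once per snippet, and each sandbox is dropped
/// before the next one is created, so no state carries over.
pub fn run_each_in_fresh_sandbox<S, F>(
    snippets: &[&str],
    mut create: F,
) -> Vec<Result<String, String>>
where
    S: EphemeralSandbox,
    F: FnMut() -> S,
{
    snippets
        .iter()
        .map(|source| {
            let mut sandbox = create(); // fresh instance for this snippet
            let outcome = sandbox.run(source);
            drop(sandbox); // never reused, even if the run failed
            outcome
        })
        .collect()
}

/// Stand-in sandbox that refuses to be used twice, which makes accidental
/// reuse visible in tests.
#[derive(Default)]
pub struct EchoSandbox {
    used: bool,
}

impl EphemeralSandbox for EchoSandbox {
    fn run(&mut self, source: &str) -> Result<String, String> {
        if self.used {
            return Err("sandbox reused".to_string());
        }
        self.used = true;
        Ok(format!("ran {} bytes", source.len()))
    }
}
```

This structure only makes sense when sandbox creation is cheap, which is why fast cold starts matter so much for this use case.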

The hyperlight-nanvix wrapper provides out-of-the-box support for running JavaScript, Python, C, and C++ programs inside Nanvix guests.

```bash
git clone https://github.com/hyperlight-dev/hyperlight-nanvix
cd hyperlight-nanvix

# Download the Nanvix toolchain and runtime
cargo run -- --setup-registry

# Run scripts directly
cargo run -- guest-examples/hello.js   # JavaScript
cargo run -- guest-examples/hello.py   # Python
```

For C and C++ programs, you’ll need to compile them first using the Nanvix toolchain (via Docker). See the repository README for compilation instructions.

You can also embed the sandbox in your own Rust program by using the crate as a library:

```rust
use hyperlight_nanvix::{Sandbox, RuntimeConfig};

#[tokio::main]
async fn main() -> anyhow::Result<()> {
    let config = RuntimeConfig::new()
        .with_log_directory("/tmp/hyperlight-nanvix")
        .with_tmp_directory("/tmp/hyperlight-nanvix");

    let mut sandbox = Sandbox::new(config)?;

    // Works with any supported file type
    sandbox.run("guest-examples/hello.js").await?;
    sandbox.run("guest-examples/hello.py").await?;
    sandbox.run("guest-examples/hello-c").await?;

    Ok(())
}
```

The same library lets the host interpose on guest system calls. This example audits every openat call before forwarding it:

```rust
use std::sync::Arc;

use hyperlight_nanvix::{Sandbox, RuntimeConfig, SyscallTable, SyscallAction};

// Host-side handler invoked whenever the guest calls openat.
// It is unsafe because it forwards a raw pointer to libc.
unsafe fn custom_openat(
    _state: &(),
    dirfd: i32,
    pathname: *const i8,
    flags: i32,
    mode: u32,
) -> i32 {
    println!("Intercepted openat call - auditing file access");
    // Forward to actual system call or block based on policy
    libc::openat(dirfd, pathname, flags, mode)
}

#[tokio::main]
async fn main() -> anyhow::Result<()> {
    let mut system_call_table = SyscallTable::new(());
    system_call_table.openat = SyscallAction::Forward(custom_openat);

    let config = RuntimeConfig::new()
        .with_system_call_table(Arc::new(system_call_table));

    let mut sandbox = Sandbox::new(config)?;
    sandbox.run("guest-examples/hello-c").await?;

    Ok(())
}
```

The repository also includes a Node.js/NAPI binding, allowing you to create sandboxes directly from JavaScript. Check out examples/ai-generated-scripts/ for a complete example of safely executing AI-generated code. This example requires additional setup—see the README in that directory.

Hyperlight is a CNCF Sandbox project, and we’re excited to see the community build on this foundation. The integration with Nanvix represents the next step in our vision: making hardware-isolated serverless execution practical for real-world applications.