Continuing the story of early DOS development

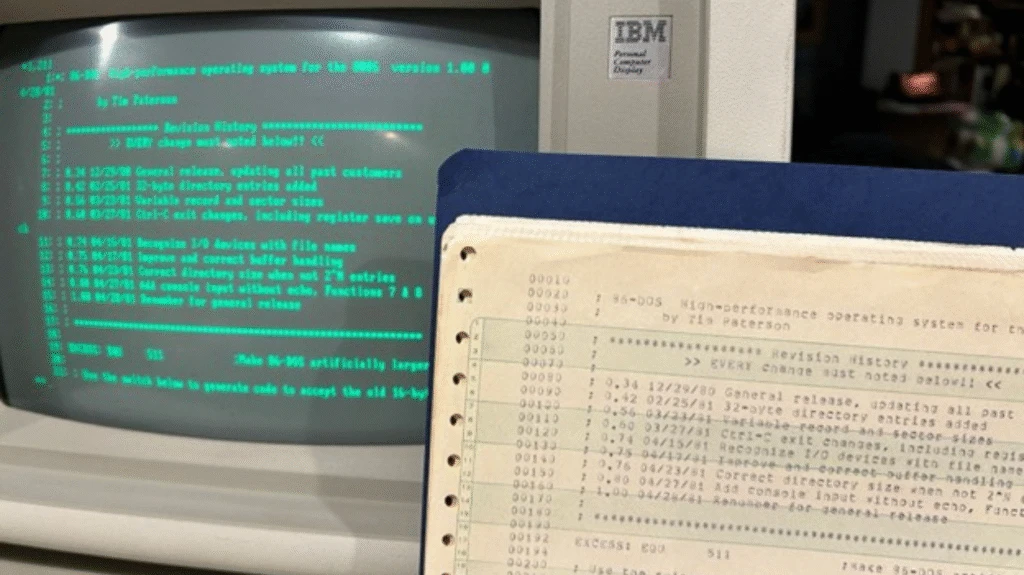

In 2018, we re-open-sourced MS‑DOS 1.25 and 2.11, and in 2024 we released the source for MS‑DOS 4.0 as well. Today, on 86-DOS 1.00’s 45th anniversary, we’re continuing that tradition with the earliest DOS source code discovered to date.